Why CARF-Accredited Organizations Should Implement Measurement-Based Care Now

- How Does the CARF Accreditation Process Work?

- Understanding the Key Standards: 2.A.12, 1.M, and 1.N

- Why Measurement-Based Care Is the Foundation of CARF’s 2026 Accreditation Changes

- A CARF-Aligned Performance Reporting Framework

- How Greenspace Supports CARF-Aligned Performance Measurement End to End

- Final Thoughts

Last year, we published an article exploring CARF’s updated 2025 standards, which formally require organizations to engage in Measurement-Informed Care (also referred to as Measurement-Based Care, or MBC). We also hosted a live panel with both CARF and industry leaders to unpack the intent behind CARF’s accreditation changes, namely, to raise the bar for quality, accountability, and outcomes in mental healthcare.

For many organizations, the introduction of MIC/MBC into the standards served as a helpful catalyst to begin implementing consistent outcome measurement throughout the care process. For others, adoption has lagged due to staffing capacity constraints, competing operational priorities, budget limitations, or a sense that accreditation timelines are long enough to postpone action.

Preparing early helps organizations maintain continuity in their accreditation outcomes and avoid unnecessary disruption during the review process. In worst-case scenarios, unprepared organizations may lose accreditation status altogether. A loss or downgrade in accreditation can impact organizational credibility, payer and funder relationships, and referral pipelines, making proactive preparation essential.

What is important to recognize is that MBC supports far more than a single accreditation requirement. When implemented well, MBC becomes a foundational capability that helps organizations meet multiple CARF standards, strengthen performance improvement plans, enhance funding and grant advocacy, and most importantly, deliver a continuously improving standard of care.

For CARF-accredited organizations approaching renewal, or those considering accreditation for the first time, this article dives into how CARF accreditation works, the key measurement standards, and why now is the right time to implement MBC.

First: How Does the CARF Accreditation Process Work?

The process begins with the provider or service organization performing an internal evaluation of treatment and operational practices based on the CARF standards. These standards have been collectively developed and refined over the last five decades by health care professionals, payers, customers, and patients.

Once a provider is satisfied that their facility has met the CARF standards in their respective field, they must request an on-site CARF survey. Organizations are required to comply with CARF standards for six months before the date of the survey. CARF selects a survey team to reflect the organization that is being evaluated, assigning industry professionals with expertise in the fields and services relevant to the provider being surveyed. Their surveys include:

- Interviews with staff, patients and the families of patients

- Review of clinical and operational documentation

- Observation of the facility’s practices and service delivery

- Fielding questions for clarification

- Feedback and recommendations to strengthen processes and operations

Once the survey is complete, CARF determines if the facility demonstrates sufficient compliance with its standards. If the facility does, the organization earns a CARF accreditation. A report is also created that identifies the provider’s strengths, the areas where the provider can make improvements, and the degree to which the provider complies with CARF standards.

If an organization is awarded accreditation, it must submit a Quality Improvement Plan (QIP) to CARF that presents how it will address any areas that need improvement. The provider must also submit an Annual Conformance to Quality Report for each year of its accreditation term.

Understanding the Key Standards: 2.A.12, 1.M, and 1.N

CARF accreditation is an ongoing commitment to quality, accountability, and improvement.

Most organizations receive either a one-year or three-year accreditation, depending on how well they meet the standards at the time of survey. Between surveys, organizations are expected to maintain compliance, monitor performance, and demonstrate continuous improvement. When renewal approaches, surveyors look not only at whether standards are technically met, but at whether systems are embedded, repeatable, and actively used.

CARF’s 2025 standards reinforce their prioritization of clinically valuable measurement throughout care by emphasizing:

- Real-time use of data at the individual client level

- Organization-wide performance measurement and management

- A formal, documented process for continuous performance improvement

This is where Sections 2.A.12, 1.M, and 1.N come together.

Standard 2.A.12: Measurement-Informed / Measurement-Based Care

This standard focuses on care at the individual client level. Organizations are required to collect feedback from clients, such as symptom scales, functional measures, or progress indicators, and use that information to inform care decisions in real time.

Aligned with the foundational components of Measurement-Based Care, organizations can meet this standard by ensuring that:

- Measures are administered consistently throughout the course of care

- Results are available to clinicians during and between sessions

- Data is used collaboratively to inform and adjust treatment plans and clinical discussions

In order to meet these standards, measurement must be embedded within the day-to-day clinical workflow. This ensures organizations can consistently collect sufficient data and leverage the resulting insights to meaningfully inform treatment decisions and clinical discussions.
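As one illustration of using repeated client-reported scores to inform care decisions, the sketch below classifies each client's trajectory and lists those who may warrant a clinical conversation. It is a minimal example under stated assumptions: the scale is one where lower scores mean fewer symptoms, and the `min_change` threshold, the function name, and the client data are all hypothetical rather than anything CARF prescribes.

```python
from typing import Sequence


def progress_status(scores: Sequence[float], min_change: float = 5.0) -> str:
    """Classify a trajectory on a scale where LOWER scores mean fewer symptoms.

    min_change is an assumed threshold for a meaningful change; in practice it
    should be chosen per measure.
    """
    if len(scores) < 2:
        return "insufficient data"
    change = scores[0] - scores[-1]  # positive = symptoms decreased
    if change >= min_change:
        return "improving"
    if change <= -min_change:
        return "worsening"
    return "stalled"


# Hypothetical caseload: scores listed in the order they were collected.
caseload = {
    "client-a": [18, 15, 11],
    "client-b": [14, 15, 14, 13],
    "client-c": [10, 13, 16],
    "client-d": [12],
}

# Clients whose progress has stalled or worsened are surfaced for review.
needs_review = sorted(
    client_id
    for client_id, scores in caseload.items()
    if progress_status(scores) in ("stalled", "worsening")
)
print(needs_review)
```

A rule this simple is only a starting point for discussion with the client; it does not replace clinical judgment.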

In the following clip, Michael Johnson, Senior Managing Director of Behavioral Health at CARF, breaks down Standard 2.A.12 for Measurement-Informed / Measurement-Based Care.

Section 1.M: Performance Measurement and Management

Section 1.M expands the lens from individual care to organizational performance. Organizations are expected to define, collect, and analyze performance indicators across both:

- Service delivery (e.g., outcomes, access, engagement, satisfaction)

- Business and operational functions (e.g., staffing, training, retention, risk management, financial sustainability)

CARF expects organizations to define meaningful performance indicators, set targets or benchmarks, ensure data quality (including reliability, validity, and completeness), and use data to understand how the organization is performing over time and where improvement is needed. MBC supports this requirement by providing high-quality, readily available data on outcomes, engagement, and satisfaction with care.

This is where performance reporting becomes critical. Leadership teams, boards, and quality committees should be able to review structured, repeatable reports that clearly show performance trends and areas for improvement.
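The idea of defining an indicator, attaching a target, and checking data completeness can be sketched in a few lines. This is an illustrative example only: the `Indicator` class, the 10-day target, and the wait-time data are hypothetical, and completeness is just one of the data quality dimensions mentioned above.

```python
from dataclasses import dataclass
from statistics import median
from typing import Optional, Sequence


@dataclass(frozen=True)
class Indicator:
    """A performance indicator with a target (all values here are hypothetical)."""
    name: str
    target: float
    higher_is_better: bool = True

    def meets_target(self, value: float) -> bool:
        if self.higher_is_better:
            return value >= self.target
        return value <= self.target


def completeness(values: Sequence[Optional[float]]) -> float:
    """Share of expected data points that were actually recorded (0.0 to 1.0)."""
    if not values:
        return 0.0
    recorded = sum(1 for v in values if v is not None)
    return recorded / len(values)


# Hypothetical data: days from referral to first appointment; None = not recorded.
wait_days = [5, 12, None, 8, 20, None, 7, 9]
access = Indicator("Median days to first appointment", target=10, higher_is_better=False)

observed = median(v for v in wait_days if v is not None)
print(access.name, observed, access.meets_target(observed))
print("completeness:", completeness(wait_days))
```

Reporting completeness alongside the indicator value makes it easier to judge how much weight a given result can bear.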

CARF’s Six Steps to Building a Performance Management System workbook reinforces these expectations and provides a clear framework for implementation.

Section 1.N: Performance Improvement

Section 1.N builds on performance measurement by requiring organizations to demonstrate a formal, ongoing performance improvement process. This includes:

- Identifying trends or gaps in performance

- Setting clear improvement goals

- Implementing targeted changes

- Tracking whether those changes lead to improvement over time

Surveyors look for evidence that data is being regularly reviewed, discussed, and used to inform decisions. This is where many organizations encounter challenges, particularly when data is spread across disconnected systems or is difficult to interpret at scale.

Why Measurement-Based Care Is the Foundation of CARF’s 2026 Accreditation Changes

When implemented thoughtfully and consistently, MBC becomes the unifying mechanism across CARF’s updated accreditation standards.

1. MBC Directly Supports the MIC/MBC Requirement (2.A.12)

MBC provides a structured, evidence-based approach to collecting client-reported data, such as patient-reported outcome measures (PROMs), and using it to inform clinical decision-making. When measures are administered at regular intervals and the results are reviewed consistently, clinicians can:

- Identify when progress stalls or worsens

- Adjust care collaboratively with clients

- Document responsiveness to client needs

This directly aligns with CARF’s expectations for individualized, data-informed care. In the clip below, Michael Johnson emphasizes how the new standard for MBC / MIC reinforces CARF’s commitment to individualized, person-centered and high-quality care:

2. MBC Strengthens Organizational Performance Measurement (1.M)

Individual-level data becomes far more useful when aggregated across an organization. When outcome data is collected consistently across clinicians, programs, and populations, organizations can:

- Track improvement trends across cohorts

- Compare outcomes across programs or service lines

- Monitor engagement, dropout, and access patterns

- Include meaningful clinical indicators in performance reports

Rather than relying solely on volume or process metrics (such as number of sessions delivered), organizations can report on the measurable impact of their services, which aligns with current accreditation expectations.
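To make the aggregation step concrete, the following sketch rolls individual score changes up to the program level and reports the share of clients with a meaningful improvement. The records, program names, and the 5-point threshold are hypothetical assumptions chosen for illustration, and the scale is assumed to be one where lower scores mean fewer symptoms.

```python
from collections import defaultdict

# Hypothetical records: (program, first score, latest score).
records = [
    ("Outpatient", 18, 9),
    ("Outpatient", 15, 14),
    ("Outpatient", 20, 11),
    ("Intensive", 22, 10),
    ("Intensive", 19, 18),
]

MEANINGFUL_DROP = 5  # assumed threshold; should be set per measure


def improvement_by_program(rows, threshold=MEANINGFUL_DROP):
    """Return {program: (clients, average change, share meaningfully improved)}."""
    changes = defaultdict(list)
    for program, first, latest in rows:
        changes[program].append(first - latest)  # positive = improvement
    summary = {}
    for program, deltas in changes.items():
        improved = sum(1 for d in deltas if d >= threshold)
        summary[program] = (
            len(deltas),
            sum(deltas) / len(deltas),
            improved / len(deltas),
        )
    return summary


summary = improvement_by_program(records)
for program, (n, avg_change, share) in summary.items():
    print(f"{program}: n={n}, avg change={avg_change:.1f}, improved={share:.0%}")
```

The same grouping could be done by clinician, population, or time period; the key design choice is that the outcome indicator is derived from routinely collected scores rather than from session counts.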

3. MBC Enables Continuous Performance Improvement (1.N)

As mentioned above, Section 1.N is where CARF evaluates whether measurement is actively used to drive clinical improvement. Surveyors are not assessing isolated improvement projects or anecdotal examples. They are looking for evidence of a repeatable, data-informed improvement cycle that is actively used across the organization.

MBC produces longitudinal, client-level outcome data, which is exactly what continuous improvement requires. When implemented consistently, MBC allows organizations to detect patterns over time, understand where care is working and where it is not, and test adjustments to treatment approaches by evaluating their impact with real outcome data.

When MBC is embedded in routine clinical workflows, performance improvement follows a clear and demonstrable cycle:

- Review performance data: Leadership and quality teams review aggregated performance reports that include clinical outcomes, client engagement, access timelines, and retention.

- Identify priority gaps or risks: For example, clients in a specific program might be improving more slowly, certain populations show higher dropout rates, or time to meaningful improvement exceeds expected standards.

- Develop and implement targeted actions: Actions might include adjusting clinical workflows, introducing additional supports (e.g., peer services, wraparound supports outside of sessions), modifying intake or assessment processes, providing targeted training or supervision, or other strategies that target the identified areas for improvement.

- Monitor impact over time: Outcome dashboards and reports are used to determine whether changes resulted in the desired outcomes. This could include faster or greater symptom improvement, improved engagement or retention, or reduced deterioration or off-track clients.

- Refine or scale successful changes: Effective interventions are sustained or expanded, and ineffective ones are revised to promote continuous improvement.
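The monitor-and-refine steps of the cycle above can be sketched as a simple before-and-after comparison against a target. This is a hedged illustration: the dropout counts, the 20% target, and the decision labels are hypothetical, and a real review would also consider sample size and other context.

```python
from typing import Tuple


def rate(events: int, total: int) -> float:
    """Proportion of episodes with the event; 0.0 when there are no episodes."""
    return events / total if total else 0.0


def evaluate_change(before: Tuple[int, int], after: Tuple[int, int], target: float) -> dict:
    """Compare a dropout rate before and after a change.

    before/after are (dropouts, episodes). Lower rates are better.
    """
    before_rate = rate(*before)
    after_rate = rate(*after)
    if after_rate <= target:
        decision = "sustain or scale"
    elif after_rate < before_rate:
        decision = "continue and refine"
    else:
        decision = "revise"
    return {"before": before_rate, "after": after_rate, "decision": decision}


# Hypothetical example: 30 of 120 episodes ended early before the change,
# 18 of 120 afterwards, against an assumed target of 20%.
result = evaluate_change(before=(30, 120), after=(18, 120), target=0.20)
print(result)
```

Recording the decision alongside the numbers gives surveyors a traceable link between the data reviewed and the action taken.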

4. MBC Supports Stronger Performance Plans and Reporting

CARF expects organizations to maintain documented performance measurement and improvement plans. With the right MBC infrastructure in place, organizations can:

- Define outcome-based performance indicators

- Populate reports automatically with real-time data

- Set and monitor targets over time

- Provide clear evidence of improvement efforts during surveys

This reduces reliance on manual spreadsheets, retrospective data pulls, and last-minute reporting, which can be common pain points during accreditation renewal cycles.

A CARF-Aligned Performance Reporting Framework

CARF does not prescribe a single report or format. Instead, it expects organizations to maintain a repeatable, organization-wide performance management process that connects data to decision-making and improvement.

In practice, an effective performance reporting framework follows a defined lifecycle:

Data collection → Aggregation → Reporting → Interpretation → Action → Reassessment

- Data collection: Organizations collect data across multiple domains, including clinical and service delivery data (e.g., client-reported outcomes, engagement, access), operational and workforce data (e.g., training, staffing, retention), experience and satisfaction data, risk and safety indicators, and financial and efficiency metrics. MBC plays a central role by ensuring that client-level and organization-level clinical outcome data is collected consistently as part of routine care, using validated measures.

- Aggregation and normalization: Individual data points are then aggregated across clinicians, programs or service lines, populations or demographic groups, and defined time periods (such as monthly, quarterly, or annually). This step allows organizations to identify and review patterns and trends to inform quality improvement planning, which is essential for meeting the expectations outlined in Sections 1.M and 1.N.

- Dashboards and performance reports: Aggregated data is presented through structured reports and dashboards that leadership and quality teams review on a regular basis. Common performance report components include the following:

  - Clinical outcome trends: Average symptom or functioning change over time, percentage of clients showing meaningful improvement, time to improvement across programs or cohorts

  - Access and throughput metrics: Time from referral to first appointment, drop-off between intake and treatment start

  - Engagement and retention: Attendance and no-show rates, episode completion or early termination

  - Client experience and satisfaction

  - Workforce indicators: Training completion, caseload distribution, staff turnover

  - Efficiency and sustainability metrics: Service utilization, resource allocation

- Interpretation and leadership review: CARF expects organizations to demonstrate that performance data is reviewed on a regular basis, interpreted by leadership and quality committees, and used to assess what is working and where gaps exist. This process commonly occurs through monthly or quarterly leadership meetings and regular board-level reporting, and may be supported by quality improvement committees or teams.

- Improvement action and reassessment: Finally, performance insights must lead to deliberate action. Organizations must identify priority improvement areas, set clear improvement goals or targets, implement defined changes, and regularly reassess whether those changes resulted in improvement. This closed-loop process is central to Section 1.N (Performance Improvement) and a primary focus during accreditation surveys.
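As a rough sketch of how the lifecycle above moves from collection through aggregation to a reviewable report, the example below groups appointment records by quarter and flags any quarter whose no-show rate exceeds a target. The appointment data, the 30% target, and the function names are hypothetical and serve only to illustrate the flow.

```python
from collections import defaultdict
from datetime import date

# Hypothetical appointment records: (date, attended?).
appointments = [
    (date(2025, 1, 14), True),
    (date(2025, 2, 3), False),
    (date(2025, 3, 21), True),
    (date(2025, 3, 28), True),
    (date(2025, 4, 9), False),
    (date(2025, 5, 2), False),
    (date(2025, 6, 17), True),
    (date(2025, 6, 30), True),
]

NO_SHOW_TARGET = 0.30  # assumed target: at most 30% no-shows per quarter


def quarter_label(day: date) -> str:
    return f"{day.year}-Q{(day.month - 1) // 3 + 1}"


def no_show_report(rows, target):
    """Aggregate by quarter and flag quarters above the target for review."""
    counts = defaultdict(lambda: [0, 0])  # quarter -> [no-shows, total]
    for day, attended in rows:
        bucket = counts[quarter_label(day)]
        bucket[0] += 0 if attended else 1
        bucket[1] += 1
    return {
        quarter: {
            "no_show_rate": no_shows / total,
            "needs_review": no_shows / total > target,
        }
        for quarter, (no_shows, total) in sorted(counts.items())
    }


report = no_show_report(appointments, NO_SHOW_TARGET)
for quarter, row in report.items():
    print(quarter, row)
```

Flagged quarters would then feed the interpretation and action steps, with the same report rerun in later periods to reassess whether changes helped.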

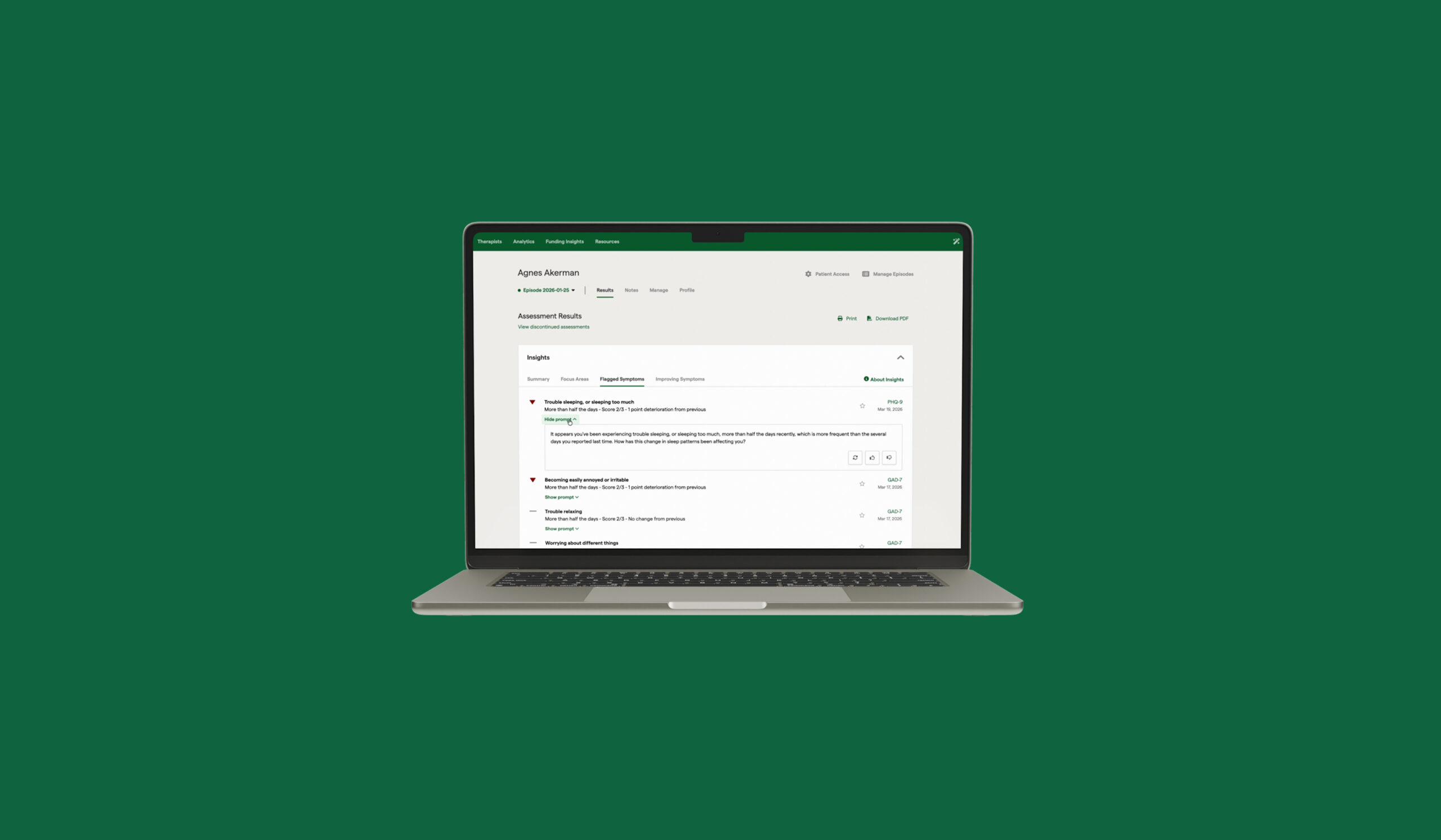

How Greenspace Supports CARF-Aligned Performance Measurement End to End

Greenspace Health is designed to support the full lifecycle of CARF-aligned performance measurement and improvement. The table below illustrates how MBC is operationalized across clinical, organizational, and accreditation workflows and requirements.

| Capability Area | How Greenspace Supports Organizations | CARF Alignment |
| --- | --- | --- |
| Clinical workflow support | | 2.A.12 (MIC / MBC) |
| Aggregated performance reporting | | 1.M (Performance Measurement and Management) |
| Continuous improvement enablement | | 1.N (Performance Improvement) |
| Data integration | | 1.M & 1.N |
| Accreditation readiness | | 2.A.12, 1.M, 1.N |
| Flexibility and customization | | Organization-specific configuration and reporting |

Accreditation May Initiate Measurement-Based Care (MBC) Adoption, but Clinical Value Sustains It

While accreditation requirements may spark initial interest, compliance alone does not sustain clinician engagement over time. Clinicians are far more likely to adopt and consistently use measurement when the process is integrated into their existing workflows, the data is relevant to their day-to-day clinical decisions, and results are easy to interpret. This foundation allows measurement to support more effective clinical discussions, treatment decisions and quality improvement without adding administrative burden.

Organizations that implement MBC solely to meet accreditation requirements may struggle with clinician adoption and data quality. Organizations that prioritize clinical value first see stronger engagement, better outcomes, and more reliable data, with accreditation readiness being a natural byproduct of a high-quality MBC implementation.

The ROI of Measurement-Based Care Goes Beyond Accreditation

Finally, MBC delivers value well beyond accreditation. Through improved client engagement and retention, earlier identification of non-response, at-risk or off-track clients, and more efficient use of clinical resources, organizations can continuously strengthen care delivery and provide clear evidence of their care quality and impact for payer, funder, and stakeholder discussions.

As expectations continue to shift towards outcomes, accountability, and value-based care, these capabilities position organizations for long-term success and sustainability.

Final Thoughts

CARF accreditation may prompt organizations to focus on Measurement-Based Care (MBC), but it should not be the sole driver. MBC is most effective when it’s embedded into clinical workflows and used to inform care, supervision, and performance improvement.

As mental health accreditation and payment models shift toward greater transparency, outcomes accountability, and value-based expectations, organizations need flexible measurement technology that supports both high-quality care delivery and organizational decision-making.

Organizations that implement MBC with a focus on clinical value and quality improvement will be best prepared for the 2026 CARF updates and better equipped to meet the growing expectations around outcomes and accountability.

To discuss how Measurement-Based Care can support your organization’s clinical practice, performance improvement, and CARF-readiness, reach out anytime to schedule a discussion with one of our MBC experts.